Introduction

The era of chatbots that forget everything once a conversation ends may be drawing to a close.

Recently, multiple sources revealed that Anthropic is making significant updates to Claude Cowork by developing Knowledge Bases to enable “permanent memory.”

This marks a transformation in how users interact with Claude, shifting from the traditional single-session context retention to a model that allows for continuous access to key information across multiple conversations and tasks.

With this feature, AI could potentially understand the relationship between current tasks and past work even days or weeks later. This also signals a shift from AI being merely a conversational assistant to becoming a task partner.

Claude Cowork’s Focus

Unlike Claude Chat, which leans towards general conversation, Claude Cowork is more aligned with collaborative work environments, focusing on knowledge-intensive tasks such as writing, research, planning, and document processing.

According to the reports, Claude Cowork aims to become a more general, efficiency-oriented entry point for work, with chat mode built in as a primary feature rather than a separate section.

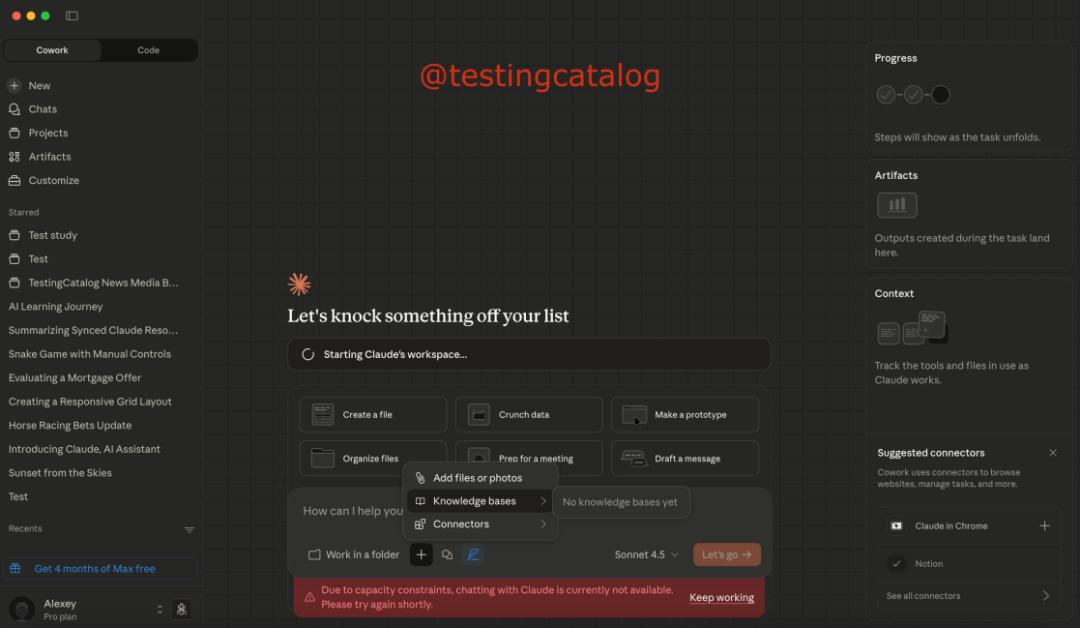

The user interface has also been simplified, with a new Artifacts section added to the right sidebar. Previously, each chatbot conversation started from scratch; now users can manage and reuse past work in this section. If using Claude used to feel like a Q&A session, it now resembles co-creating related projects with Claude.

Knowledge Bases and Dynamic Information

A key aspect of the new Cowork feature is the introduction of Knowledge Bases, described as “persistent repositories.” Claude can reference these Knowledge Bases to retrieve relevant contextual information and gradually update user preferences, decisions, facts, or lessons learned.

This means the knowledge supporting Claude is no longer static. Instead of relying solely on the limited context of a single conversation, it organizes information into multiple categorized Knowledge Bases that can be updated over time.

This allows users to select specific Knowledge Bases as contextual attachments when handling related tasks in Cowork, which is particularly important for workflows involving automation and document management.
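To make the idea concrete, here is a minimal sketch of what a persistent, categorized knowledge base could look like. It is purely illustrative: the `KnowledgeBase` class, its `remember`, `save`, and `load` methods, the `build_context` helper, and the JSON file layout are all hypothetical names invented for this example, not Anthropic's implementation or API.

```python
import json
import tempfile
from dataclasses import dataclass, field, asdict
from pathlib import Path


@dataclass
class KnowledgeBase:
    """Hypothetical persistent repository of notes, grouped under one name."""
    name: str
    entries: list[dict] = field(default_factory=list)

    def remember(self, kind: str, text: str) -> None:
        # kind is a free-form label such as "preference", "decision",
        # "fact", or "lesson" (the categories mentioned in the article).
        self.entries.append({"kind": kind, "text": text})

    def save(self, directory: Path) -> Path:
        # Persist to disk so the content outlives a single session.
        path = directory / f"{self.name}.json"
        path.write_text(json.dumps(asdict(self)))
        return path

    @classmethod
    def load(cls, path: Path) -> "KnowledgeBase":
        return cls(**json.loads(path.read_text()))


def build_context(bases: list[KnowledgeBase]) -> str:
    """Flatten the selected knowledge bases into text to attach to a task."""
    lines = []
    for kb in bases:
        for entry in kb.entries:
            lines.append(f"[{kb.name}/{entry['kind']}] {entry['text']}")
    return "\n".join(lines)


with tempfile.TemporaryDirectory() as tmp:
    kb = KnowledgeBase("style-guide")
    kb.remember("preference", "Use British spelling in reports")
    saved_path = kb.save(Path(tmp))
    restored = KnowledgeBase.load(saved_path)  # simulates a later session

print(build_context([restored]))
```

The point of the sketch is the separation of concerns: entries accumulate over time, survive between sessions, and only the bases a user selects are attached to a given task.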

With this functionality, Claude is expected to handle more complex tasks reliably, drawing on stored context rather than inferring everything from a single conversation.

Enhanced Automation Capabilities

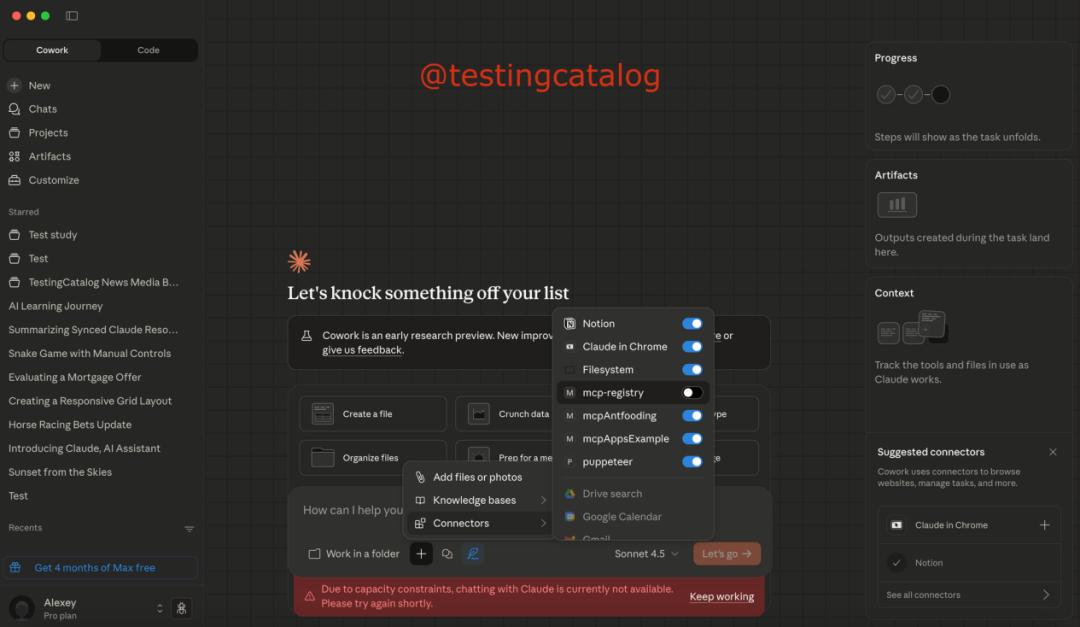

An exciting update is the expanded MCP connector system, which could significantly enhance Cowork’s automation capabilities. The reference to the MCP registry suggests that Claude may dynamically manage and operate multiple remote connectors and potentially install approved modules as needed for task completion.

This indicates that Claude Cowork is moving beyond merely generating ideas and writing copy toward a stage where it actively operates systems and calls tools.

Lightweight Features and User Accessibility

In addition to key feature updates, lightweight features are also being developed. Tibor Blaho recently announced on social media that Claude’s web interface is being upgraded to include a web voice mode, which will greatly enhance its accessibility and usability.

At the same time, Claude has improved the previously announced Pixelate feature (which allows users to convert images into pixel art avatars), enabling it to generate higher-quality results and extending it to desktop applications.

These updates collectively indicate that Anthropic is evolving Claude from a standard chat assistant into a more comprehensive productivity assistant, focusing on modular knowledge management, automation, and multimodal input options.

Strategic Alignment and Community Response

This aligns closely with Anthropic’s overall strategy to integrate traditional chat, knowledge bases, and intelligent agent functionalities into a unified interface, embedding Claude more deeply into daily workflows.

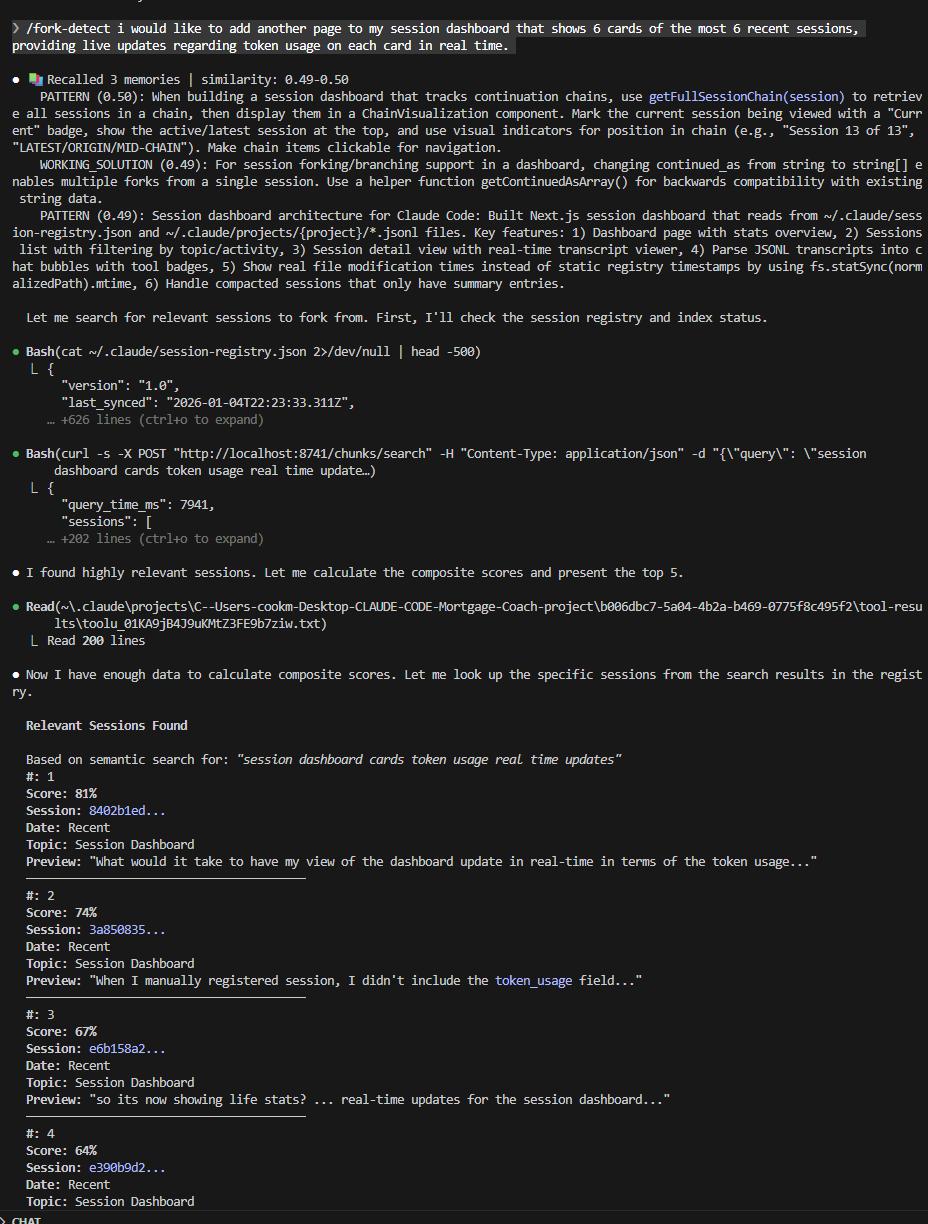

The updates to Claude Cowork have sparked significant attention and discussion in the field, with rapid responses from the developer community. Developer Zac shared on social media that he has implemented Smart Forking on existing tools, providing practical examples of the feasibility of long-term memory in large models. He strongly recommends all Claude Code users integrate this feature into their workflows.

Users can now say goodbye to repeatedly explaining their needs to chatbots. Unlike the organized memory integration in Claude Cowork, Smart Forking is built on a vector database of embeddings generated from Claude Code sessions, which lets it retrieve the most relevant context from historical conversations for the current task.

According to this developer, the functionality is straightforward: invoke the /fork-detect tool and specify what you want to do. The tool passes your prompt through an embedding model and compares the result against a vectorized RAG database containing all chat records, which updates automatically as you chat.

It then returns the five chat records most relevant to the desired action, ranked from high to low by relevance score. Users can pick a record to fork from, and the tool provides a fork command that can be copied and pasted into a new terminal.
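The retrieval step described above can be sketched as a top-k similarity search. This is a minimal illustration and not Zac's code: the `embed` function is a toy bag-of-words stand-in for a real embedding model, the `sessions` dictionary stands in for the vector database, and the names `cosine` and `top_sessions` are assumptions made for this example.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_sessions(prompt: str, sessions: dict[str, str], k: int = 5) -> list[tuple[str, float]]:
    """Return the k session ids most similar to the prompt, highest score first."""
    query = embed(prompt)
    scored = [(sid, cosine(query, embed(text))) for sid, text in sessions.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical session summaries standing in for stored chat records.
sessions = {
    "s1": "refactor the auth middleware and add token refresh",
    "s2": "write unit tests for the billing module",
    "s3": "fix token refresh bug in auth middleware",
    "s4": "set up docker compose for local development",
    "s5": "update README with install steps",
    "s6": "tune postgres indexes for the reports query",
}

for session_id, score in top_sessions("add token refresh to auth middleware", sessions):
    print(f"{session_id}: {score:.2f}")
```

A real setup would replace `embed` with a learned embedding model and the dictionary scan with an indexed vector store, but the ranking logic (score every candidate, sort descending, keep five) has the same shape.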

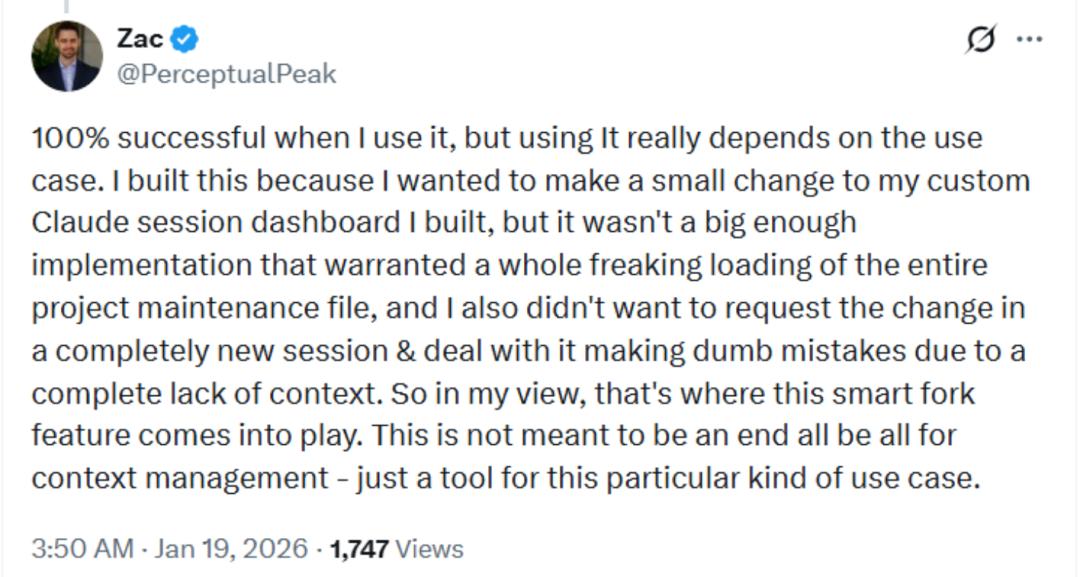

Zac reports a 100% success rate when using this feature, though its effectiveness depends on the specific use case. It is not the ultimate solution for context management but serves as a tool for this particular scenario.

Conclusion

Overall, whether through the evolution of Anthropic’s product features or the proactive exploration by the developer community, a common trend emerges: as use cases deepen, long-term memory is becoming a critical foundational capability in AI collaboration tools.
